Feature Flags for AI Models: Progressive Rollout Patterns That Reduce Production Risk

You would never ship a new microservice to 100% of traffic without a canary. So why are you deploying model updates to your entire user base simultaneously? Feature flags for AI are different from feature flags for code -- and most teams are learning that distinction in production.

The Model Deploy Problem Nobody Budgeted For

In traditional software, a feature flag is straightforward. You wrap new code in a conditional, route a percentage of users to the new path, measure error rates and latency, and gradually increase traffic. If something breaks, you kill the flag. The blast radius is contained. The rollback is instant.
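A minimal sketch of that traditional percent-based flag, assuming each user has a stable string ID. The names `bucket`, `is_enabled`, and the flag name `new_checkout` are hypothetical and not taken from any particular flag library.

```python
import hashlib


def bucket(user_id: str, flag_name: str) -> int:
    """Map a user to a stable bucket in [0, 100) for a given flag."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).hexdigest()
    # The first 8 hex characters give a 32-bit integer, which is plenty for bucketing.
    return int(digest[:8], 16) % 100


def is_enabled(user_id: str, flag_name: str, rollout_percent: int) -> bool:
    """Return True if the user falls inside the current rollout percentage.

    Setting rollout_percent to 0 acts as the kill switch.
    """
    return bucket(user_id, flag_name) < rollout_percent


if __name__ == "__main__":
    for percent in (0, 5, 25, 100):
        print(percent, is_enabled("user-42", "new_checkout", percent))
```

Because the bucket is derived from a hash rather than a random draw, a user who is in the 5% cohort stays in the cohort when the rollout grows to 25%, so raising the percentage only ever adds users.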

AI model deployments look nothing like this.

A model update is not a code change. It is a behavioral change that manifests probabilistically across millions of possible inputs. A model that performs brilliantly on your eval suite can fail catastrophically on a distribution of real-world queries you never anticipated. A model that improves average quality by 15% might degrade quality for a specific customer segment by 40%. And unlike a code bug that produces a stack trace, a model regression produces outputs that look plausible but are subtly wrong.

This is why the standard feature flag playbook -- percent-based traffic splitting with error rate monitoring -- is necessary but wildly insufficient for AI systems. You need feature flags that understand the unique failure modes of probabilistic systems.

Why Standard Canary Deployments Fail for Models

Consider a standard canary deployment: route 5% of traffic to the new model, monitor error rates for an hour, promote to 25% if healthy. This works when failure is binary and immediate. A 500 error. A timeout. A crash.

Model failures are rarely binary. They are distributional. The new model might handle 95% of queries better and 5% of queries catastrophically worse. If your canary population is randomly sampled, you will see a net improvement in aggregate metrics while a subset of users experiences severe degradation. Your monitoring says "promote." Your users say "something is wrong but I cannot articulate what."
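The arithmetic behind that 95/5 split can be sketched as follows. The quality scores are invented for illustration (a 0 to 1 scale, with the old model at 0.70 everywhere), and the segment names `common` and `rare` are hypothetical.

```python
from statistics import mean


def summarize(records):
    """Return (overall mean delta, per-segment mean delta) of new minus old scores.

    Each record is a (segment, old_score, new_score) tuple.
    """
    deltas_by_segment = {}
    for segment, old_score, new_score in records:
        deltas_by_segment.setdefault(segment, []).append(new_score - old_score)
    all_deltas = [d for deltas in deltas_by_segment.values() for d in deltas]
    per_segment = {seg: mean(deltas) for seg, deltas in deltas_by_segment.items()}
    return mean(all_deltas), per_segment


# Illustrative data only: 95 requests improve slightly, 5 requests get much worse.
records = [("common", 0.70, 0.80)] * 95 + [("rare", 0.70, 0.30)] * 5

if __name__ == "__main__":
    overall, per_segment = summarize(records)
    print(f"overall delta: {overall:+.3f}")
    for segment, delta in per_segment.items():
        print(f"{segment:>6} delta: {delta:+.3f}")
```

With these made-up numbers the aggregate delta is positive even though the rare segment drops sharply, which is exactly the case an aggregate-only promotion check would approve.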

The observability challenges in AI systems compound this problem. Traditional APM tells you whether the system is up. It does not tell you whether the system is right. Model quality is a spectrum, not a boolean, and degradation can be invisible to infrastructure metrics while being obvious to users.

Progressive Rollout Patterns for AI

Pattern 1: Segment-Aware Routing

Instead of random traffic splitting, route model versions based on user segments that represent distinct input distributions. Enterprise customers with complex queries get the new model last, not first. High-value workflows stay on the proven model until the new one has been validated against simpler use cases.

This requires understanding your query distribution and mapping segments to risk profiles. A customer support chatbot might segment by: simple FAQ queries (low risk, deploy first), multi-turn conversations (medium risk, deploy second), and escalation-path interactions (high risk, deploy last).
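One way to express that ordering in code is to attach a risk level to each segment and enable the new model up to a maximum risk level. This is a sketch under assumptions: the segment keys, the `Risk` enum, and the `"old"`/`"new"` labels are hypothetical, and how a request gets classified into a segment is left out.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical mapping for the customer support example above.
SEGMENT_RISK = {
    "faq": Risk.LOW,
    "multi_turn": Risk.MEDIUM,
    "escalation": Risk.HIGH,
}


def choose_model(segment: str, max_enabled_risk: int) -> str:
    """Return "new" if the segment's risk is within the enabled level, else "old".

    max_enabled_risk = 0 disables the new model for everyone.
    Unknown segments are treated as high risk so they stay on the proven model.
    """
    risk = SEGMENT_RISK.get(segment, Risk.HIGH)
    return "new" if risk <= max_enabled_risk else "old"


if __name__ == "__main__":
    for segment in ("faq", "multi_turn", "escalation", "unclassified"):
        print(segment, choose_model(segment, Risk.LOW))
```

Defaulting unknown segments to the highest risk level is a deliberate choice here: a classifier miss should keep a request on the proven model rather than silently widen the rollout.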

Pattern 2: Shadow Scoring

Run the new model in shadow mode -- it processes every request and produces an output, but only the old model's output is served to users. Compare outputs using automated eval metrics and human review sampling. This gives you distributional coverage that canary deployments cannot match because you see the new model's behavior on 100% of real traffic without any user impact.

The cost is that you pay for inference twice during the shadow period. For teams already struggling with LLM cost engineering, this feels expensive. But the cost of a bad model deployment -- user trust erosion, data contamination from feedback loops, emergency rollbacks that lose days of iteration -- dwarfs the inference cost of a shadow period.
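A minimal sketch of a shadow request path, assuming both models are plain callables that take a query string and return a string. `handle_request`, `exact_match`, and the list-based `shadow_log` are hypothetical stand-ins; a real system would likely run the shadow call off the request path and use a richer comparison metric.

```python
from typing import Callable, Dict, List


def exact_match(served: str, shadow: str) -> float:
    """Placeholder comparison metric: 1.0 if outputs are identical, else 0.0."""
    return 1.0 if served == shadow else 0.0


def handle_request(
    query: str,
    old_model: Callable[[str], str],
    new_model: Callable[[str], str],
    compare: Callable[[str, str], float],
    shadow_log: List[Dict],
) -> str:
    """Serve the old model's output and record the new model's output for review."""
    served = old_model(query)
    try:
        # Called inline here for simplicity; in production this would usually be
        # asynchronous so the shadow model cannot add latency to the response.
        shadow = new_model(query)
        shadow_log.append(
            {"query": query, "served": served, "shadow": shadow,
             "score": compare(served, shadow)}
        )
    except Exception as exc:
        # A failing shadow model must never affect what the user receives.
        shadow_log.append(
            {"query": query, "served": served, "shadow": None, "error": repr(exc)}
        )
    return served
```

The logged pairs are what the automated eval metrics and human review sampling would consume; the user only ever sees `served`.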

Pattern 3: Confidence-Gated Routing

Route requests to the new model only when its confidence score exceeds a threshold. Low-confidence requests fall back to the old model. This creates a natural progressive rollout: the new model handles easy cases first and gradually takes on harder cases as you lower the confidence threshold based on observed performance.

This pattern is elegant but requires that your model produce calibrated confidence scores -- which many LLM-based systems do not natively provide. You may need a separate calibration model or heuristic confidence estimation based on output characteristics. The guardrails infrastructure you have already built for safety can often be repurposed for confidence-gated routing.
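The routing decision itself can be sketched in a few lines, assuming the new model (or a calibration layer wrapped around it) returns an `(output, confidence)` pair with confidence in the range 0 to 1. That return shape and the name `route_by_confidence` are assumptions made for illustration.

```python
from typing import Callable, Tuple


def route_by_confidence(
    query: str,
    new_model: Callable[[str], Tuple[str, float]],
    old_model: Callable[[str], str],
    threshold: float,
) -> Tuple[str, str]:
    """Return (output, source), where source is "new" or "old".

    The new model's output is served only when its confidence exceeds the
    threshold; otherwise the request falls back to the old model.
    """
    output, confidence = new_model(query)
    if confidence > threshold:
        return output, "new"
    return old_model(query), "old"
```

Lowering `threshold` over time widens the share of traffic the new model serves. Note that in this sketch the new model runs on every request, so fallback requests pay for both models.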

Pattern 4: Eval-Gated Promotion

Instead of time-based promotion ("it has been stable for 2 hours, promote to 50%"), gate promotion on eval results. Define a suite of automated evaluations that must pass at each traffic percentage before promotion proceeds. These evals should cover not just average quality but tail behavior: worst-case outputs, specific failure modes from previous incidents, and regression tests for known edge cases.

This is eval-driven development applied to deployment rather than development. The same eval infrastructure you use to validate a model before deployment should run continuously during deployment to confirm that the model's behavior on production traffic matches your expectations.
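A promotion gate along these lines might look like the sketch below. The stage list, the `EvalResult` shape, and the example eval names are hypothetical; the point is only that traffic advances when every eval passes, not when a timer expires.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical rollout stages, as percentages of traffic.
STAGES = [5, 25, 50, 100]


@dataclass
class EvalResult:
    name: str
    score: float
    threshold: float

    @property
    def passed(self) -> bool:
        return self.score >= self.threshold


def next_traffic_percent(current: int, results: List[EvalResult]) -> int:
    """Return the next stage if every eval passed, otherwise stay at current.

    An empty result list blocks promotion: no evidence is not a pass.
    Raises ValueError if current is not one of STAGES.
    """
    index = STAGES.index(current)
    if not results or not all(r.passed for r in results):
        return current
    return STAGES[min(index + 1, len(STAGES) - 1)]


if __name__ == "__main__":
    results = [
        EvalResult("average_quality", score=0.91, threshold=0.85),
        EvalResult("worst_decile_quality", score=0.62, threshold=0.70),
    ]
    # Average quality passes, but the tail eval fails, so traffic stays at 5%.
    print(next_traffic_percent(5, results))
```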

The Rollback Problem Is Harder Than You Think

In code deployments, rollback means reverting to the previous version. In model deployments, rollback is complicated by state.

If the new model produced outputs that users interacted with -- saved responses, triggered downstream actions, generated data that was stored -- rolling back the model does not roll back those effects. A chatbot that gave wrong answers for 30 minutes created a trust deficit that persists after rollback. A recommendation system that showed irrelevant results for an hour trained users to ignore recommendations going forward.

Worse, if you are training on user feedback (RLHF, DPO, or any feedback loop), outputs from the bad model that received user interactions are now contaminating your training data. You need to tag all outputs with model version and be prepared to filter training data by deployment window.

This is why audit trail infrastructure is not just a compliance requirement -- it is an operational necessity. Every output must be traceable to the model version that produced it, and you need the ability to retroactively identify and quarantine outputs from failed deployments.
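A sketch of what version tagging buys you at rollback time, assuming every stored output carries a model version and a timestamp. The `OutputRecord` fields and the function name `split_quarantined` are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Tuple


@dataclass(frozen=True)
class OutputRecord:
    request_id: str
    model_version: str
    created_at: datetime
    text: str


def split_quarantined(
    records: List[OutputRecord],
    bad_version: str,
    window_start: datetime,
    window_end: datetime,
) -> Tuple[List[OutputRecord], List[OutputRecord]]:
    """Split records into (clean, quarantined).

    A record is quarantined when it came from the failed model version
    and was produced inside the deployment window (inclusive on both ends).
    """
    clean: List[OutputRecord] = []
    quarantined: List[OutputRecord] = []
    for record in records:
        in_window = window_start <= record.created_at <= window_end
        if record.model_version == bad_version and in_window:
            quarantined.append(record)
        else:
            clean.append(record)
    return clean, quarantined
```

The `clean` list is what would be allowed back into a feedback or training pipeline; the `quarantined` list is the starting point for forensics and any user-facing remediation.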

Building the Infrastructure

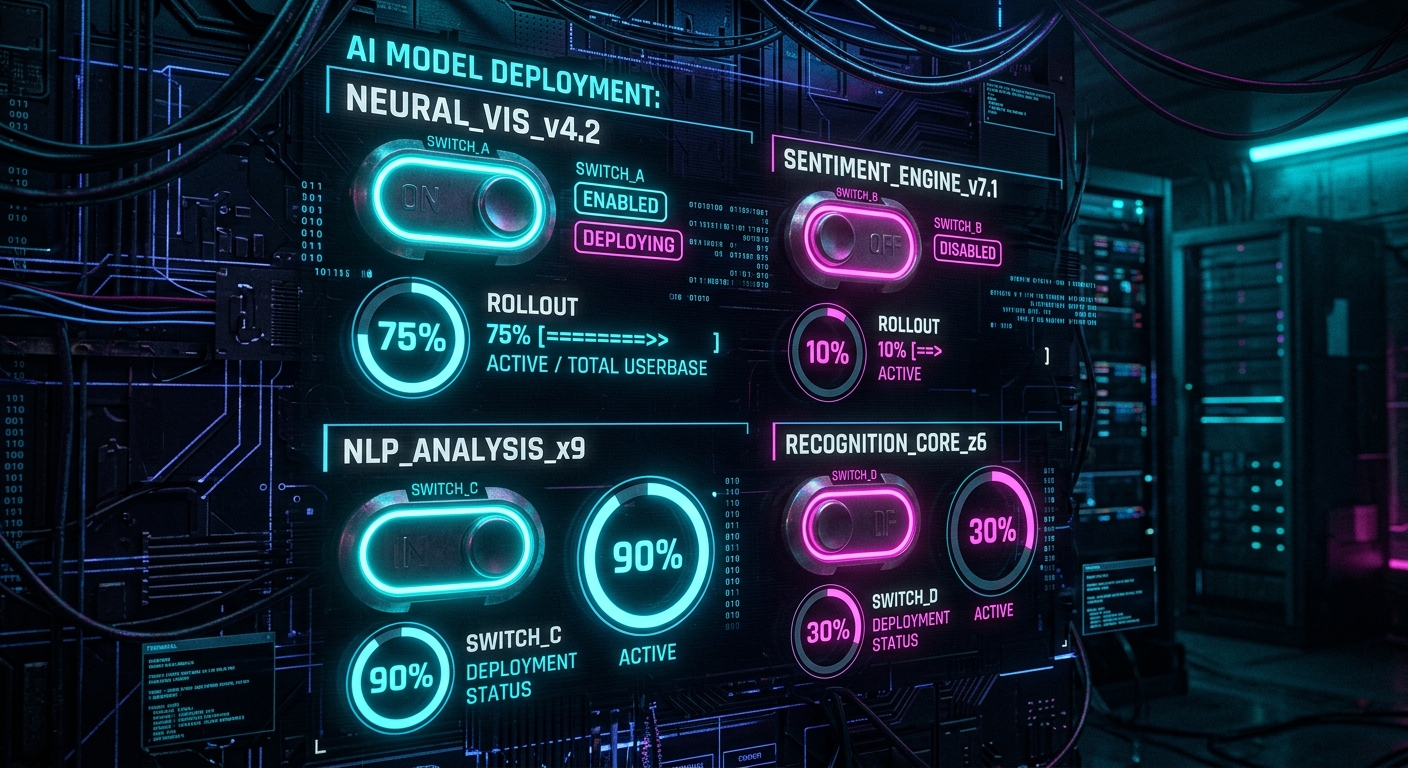

The minimum viable feature flag system for AI models requires:

- A routing layer that can split traffic by arbitrary predicates (user segment, query characteristics, confidence scores)
- A shadow execution mode that runs models without serving their outputs
- Automated eval pipelines that run continuously against production traffic samples
- Version-tagged output storage for rollback forensics
- Promotion gates that block traffic increases until quality thresholds are met
- Segment-level quality dashboards (not just aggregates)
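
As a rough illustration of the first item, a routing layer driven by arbitrary predicates can be sketched as an ordered list of rules where the first match wins. The request fields (`segment`, `query`), the version labels `v1` and `v2`, and the rules themselves are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

Request = Dict[str, Any]


@dataclass
class Rule:
    name: str
    predicate: Callable[[Request], bool]
    model_version: str


def route(request: Request, rules: List[Rule], default_version: str) -> str:
    """Return the model version of the first matching rule, else the default."""
    for rule in rules:
        if rule.predicate(request):
            return rule.model_version
    return default_version


# Rules are evaluated in order, so protective rules go first.
RULES = [
    Rule("escalations stay on the proven model",
         lambda r: r.get("segment") == "escalation", "v1"),
    Rule("short FAQ queries try the candidate",
         lambda r: r.get("segment") == "faq" and len(r.get("query", "")) < 200, "v2"),
]

if __name__ == "__main__":
    print(route({"segment": "faq", "query": "How do I reset my password?"}, RULES, "v1"))
```

Keeping the rules as data rather than scattered conditionals means a rollback is a change to one list, and the rule name that matched can be logged alongside the model version for later forensics.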

Most teams build this incrementally after their first bad deployment. The smart ones build it before. The cost of the infrastructure is a fraction of the cost of a single model incident that reaches your entire user base.

The pattern is consistent across production AI architectures: the teams shipping AI reliably are the ones who invested in deployment infrastructure as seriously as they invested in model quality. The model is not the product. The system around the model is the product. And feature flags are load-bearing infrastructure in that system.

